WARNING: Do not try this at home. Call VMware Support! Only the lucky, the brave and the immortal should keep reading. Before you change anything, take offline snapshots of your SDDC Manager appliance.

Background

When expanding a vSAN stretched cluster in VCF by adding newly prepared hosts, you use the POST /v1/clusters/{clusterId}/expand API endpoint. Broadcom's official documentation describes the required JSON spec, but as we discovered, it omits a critical field. Without that field, the operation fails every time in environments where each availability zone uses its own management VLAN and network pool.

Environment

- VCF Version: 5.2.2.0 Build 24936865

- Cluster type: vSAN Stretched Cluster across two AZs

- AZ1 hosts: Management VLAN 100, Network Pool CORP-AZ1

- AZ2 hosts: Management VLAN 200, Network Pool CORP-AZ2

- Fault domains: CORP-CLUSTER01_primary-az-faultdomain (AZ1) and CORP-CLUSTER01_secondary-az-faultdomain (AZ2)

- Goal: Add 2 hosts to each fault domain (4 hosts total)

What the documentation says

```json
{
  "clusterExpansionSpec": {
    "hostSpecs": [ {
      "id": "ESXi host 1 ID",
      "licenseKey": "XXXXX-XXXXX-XXXXX-XXXXX-XXXXX",
      "azName": "primary/secondary",
      "hostNetworkSpec": {
        "vmNics": [ {
          "id": "vmnic0",
          "vdsName": "<vSphere Distributed Switch 1>"
        },
        {
          "id": "vmnic1",
          "vdsName": "<vSphere Distributed Switch 2>"
        } ]
      }
    }
```

Note that hostNetworkSpec contains only vmNics; there is no networkPoolId.

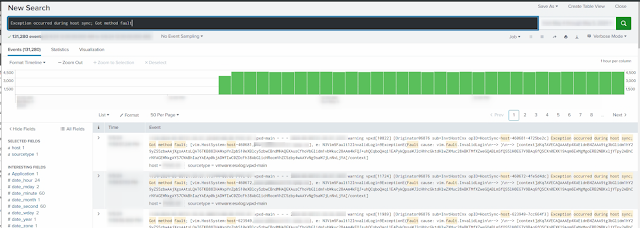

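The error

Submitting the documented spec fails host network validation:

```text
HOST_NETWORK_VALIDATION_FAILED
Host corpesxi01.corp.local VlanId 100 is not same as availability zones VlanId 200
```

The log shows the same error, followed by SDDC Manager connecting to an AZ2 host:

```text
ERROR Host corpesxi01.corp.local VlanId 100 is not same as availability zones VlanId 200
DEBUG Getting service credential with entity ID <AZ2-host-uuid>
INFO Host params: ip: 10.10.200.11, username: svc-vcf-corpesxi03
DEBUG Connecting to https://corpesxi03.corp.local:443/sdk
```

In other words, a host on the AZ1 management VLAN (100) was checked against the AZ2 VLAN (200). Without networkPoolId in the spec, SDDC Manager apparently has no reliable way to tell which network pool, and therefore which VLAN, applies to each host.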
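To spot this failure quickly in a long log, a small helper can pull the host and both VLAN IDs out of every mismatch line. This is a minimal sketch: `vlan_mismatches` is a hypothetical helper, it assumes the message text matches the excerpt above exactly, and the default log path (`/var/log/vmware/vcf/domainmanager/domainmanager.log`) is an assumption you should verify on your own appliance.

```bash
#!/usr/bin/env bash
# Sketch only: summarise VLAN-mismatch validation errors from an SDDC Manager log.
# ASSUMPTION: lines look like the excerpt above:
#   "... Host <fqdn> VlanId <n> is not same as availability zones VlanId <m>"
# ASSUMPTION: the default log path below; pass your own path as $1 if it differs.

# vlan_mismatches (hypothetical helper): prints "fqdn host_vlan az_vlan", one per host.
vlan_mismatches() {
  local log_file="${1:-/var/log/vmware/vcf/domainmanager/domainmanager.log}"
  # sed -n plus the p flag prints only lines where the substitution matched,
  # rewritten to the three captured fields; sort -u drops repeated messages.
  sed -n 's/.*Host \([^ ]*\) VlanId \([0-9]*\) is not same as availability zones VlanId \([0-9]*\).*/\1 \2 \3/p' \
    "$log_file" | sort -u
}
```

Run against a log containing the excerpt above, `vlan_mismatches ./domainmanager.log` prints `corpesxi01.corp.local 100 200`: the host, its own VLAN, and the availability zone VLAN it was compared against.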

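Solution: Add networkPoolId to hostNetworkSpec

Tell SDDC Manager explicitly which network pool each host belongs to by adding networkPoolId to every hostNetworkSpec. You need two pieces of information: the ID of each network pool, and the management VLAN and pool of each unassigned host.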

First, list the network pools and their IDs:

```powershell
# $token must already hold a valid SDDC Manager API access token (for example from POST /v1/tokens)
$headers = @{ Authorization = "Bearer $token" }

$pools = Invoke-RestMethod -Method Get `
    -Uri "https://sddc-manager.corp.local/v1/network-pools" `
    -Headers $headers

$pools.elements | ForEach-Object {
    Write-Host "Pool: $($_.name) ID: $($_.id)"
}
```

```text
Pool: CORP-AZ1 ID: aaaaaaaa-1111-2222-3333-bbbbbbbbbbbb
Pool: CORP-AZ2 ID: cccccccc-4444-5555-6666-dddddddddddd
```

Then list the unassigned hosts with their management VLAN and current network pool:

```powershell
Invoke-RestMethod -Method Get `
    -Uri "https://sddc-manager.corp.local/v1/hosts?status=UNASSIGNED_USEABLE" `
    -Headers $headers |
    Select-Object -ExpandProperty elements |
    Select-Object id, fqdn,
        @{N="vlan"; E={($_.networks | Where-Object {$_.type -eq "MANAGEMENT"}).vlanId}},
        @{N="pool"; E={$_.networkpool.name}} |
    Format-Table -AutoSize
```

```text
id                                   fqdn                  vlan pool
--                                   ----                  ---- ----
aaaaaaaa-0001-0001-0001-000000000001 corpesxi05.corp.local 100  CORP-AZ1
aaaaaaaa-0001-0001-0001-000000000002 corpesxi06.corp.local 100  CORP-AZ1
aaaaaaaa-0001-0001-0001-000000000003 corpesxi07.corp.local 200  CORP-AZ2
aaaaaaaa-0001-0001-0001-000000000004 corpesxi08.corp.local 200  CORP-AZ2
```
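Rather than matching hosts to fault domains by hand, you can derive each host's azName and networkPoolId from its management VLAN. A minimal bash sketch, assuming the mapping from this environment (VLAN 100 maps to the primary fault domain and the CORP-AZ1 pool, VLAN 200 to the secondary fault domain and the CORP-AZ2 pool); `map_hosts` is a hypothetical helper and the arrays must be edited for your own environment.

```bash
#!/usr/bin/env bash
# Sketch only: derive azName and networkPoolId from each host's management VLAN.
# ASSUMPTION: the mappings below are this post's example values; edit them for
# your environment. Requires bash 4+ for associative arrays.

declare -A AZ_BY_VLAN=(
  [100]="CORP-CLUSTER01_primary-az-faultdomain"
  [200]="CORP-CLUSTER01_secondary-az-faultdomain"
)
declare -A POOL_ID_BY_VLAN=(
  [100]="aaaaaaaa-1111-2222-3333-bbbbbbbbbbbb"
  [200]="cccccccc-4444-5555-6666-dddddddddddd"
)

# map_hosts (hypothetical helper): reads "id fqdn vlan pool" rows on stdin and
# prints "id azName networkPoolId" per host. Rows whose vlan column is not
# numeric (table headers, dash rules) are skipped; "pool" absorbs the rest of
# the line. Returns 1 if any host is on a VLAN with no mapped fault domain.
map_hosts() {
  local id fqdn vlan pool rc=0
  while read -r id fqdn vlan pool; do
    [[ "$vlan" =~ ^[0-9]+$ ]] || continue
    if [[ -z "${AZ_BY_VLAN[$vlan]:-}" ]]; then
      echo "No fault domain mapped for $fqdn (VLAN $vlan)" >&2
      rc=1
      continue
    fi
    echo "$id ${AZ_BY_VLAN[$vlan]} ${POOL_ID_BY_VLAN[$vlan]}"
  done
  return "$rc"
}
```

If the host table above is saved to `hosts.txt`, `map_hosts < hosts.txt` prints one `id azName networkPoolId` line per host. A host on an unexpected VLAN is reported on stderr instead of being silently placed in a fault domain.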

Finally, add the matching networkPoolId to each host in the expansion spec:

```json
{
  "clusterExpansionSpec": {
    "hostSpecs": [
      {
        "id": "aaaaaaaa-0001-0001-0001-000000000001",
        "licenseKey": "XXXXX-XXXXX-XXXXX-XXXXX-XXXXX",
        "azName": "CORP-CLUSTER01_primary-az-faultdomain",
        "hostNetworkSpec": {
          "networkPoolId": "aaaaaaaa-1111-2222-3333-bbbbbbbbbbbb",
          "vmNics": [
            { "id": "vmnic0", "vdsName": "CORP-VDS001" },
            { "id": "vmnic2", "vdsName": "CORP-VDS001" }
          ]
        }
      },
      {
        "id": "aaaaaaaa-0001-0001-0001-000000000002",
        "licenseKey": "XXXXX-XXXXX-XXXXX-XXXXX-XXXXX",
        "azName": "CORP-CLUSTER01_primary-az-faultdomain",
        "hostNetworkSpec": {
          "networkPoolId": "aaaaaaaa-1111-2222-3333-bbbbbbbbbbbb",
          "vmNics": [
            { "id": "vmnic0", "vdsName": "CORP-VDS001" },
            { "id": "vmnic2", "vdsName": "CORP-VDS001" }
          ]
        }
      },
      {
        "id": "aaaaaaaa-0001-0001-0001-000000000003",
        "licenseKey": "XXXXX-XXXXX-XXXXX-XXXXX-XXXXX",
        "azName": "CORP-CLUSTER01_secondary-az-faultdomain",
        "hostNetworkSpec": {
          "networkPoolId": "cccccccc-4444-5555-6666-dddddddddddd",
          "vmNics": [
            { "id": "vmnic0", "vdsName": "CORP-VDS001" },
            { "id": "vmnic2", "vdsName": "CORP-VDS001" }
          ]
        }
      },
      {
        "id": "aaaaaaaa-0001-0001-0001-000000000004",
        "licenseKey": "XXXXX-XXXXX-XXXXX-XXXXX-XXXXX",
        "azName": "CORP-CLUSTER01_secondary-az-faultdomain",
        "hostNetworkSpec": {
          "networkPoolId": "cccccccc-4444-5555-6666-dddddddddddd",
          "vmNics": [
            { "id": "vmnic0", "vdsName": "CORP-VDS001" },
            { "id": "vmnic2", "vdsName": "CORP-VDS001" }
          ]
        }
      }
    ]
  }
}
```

Key takeaway

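For every host, azName and networkPoolId must both point to the availability zone that matches the host's management VLAN:

| Host VLAN | Network Pool | azName | networkPoolId |
|---|---|---|---|
| VLAN 100 | CORP-AZ1 | primary-az-faultdomain | AZ1 pool UUID |
| VLAN 200 | CORP-AZ2 | secondary-az-faultdomain | AZ2 pool UUID |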
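With many hosts, generating the spec reduces the risk of leaving one host without networkPoolId. A minimal sketch under the same assumptions as the example spec in this post: one license key, with `vmnic0` and `vmnic2` on a single VDS. `render_expansion_spec` is a hypothetical helper. It does no JSON escaping, so use it only with plain identifier values.

```bash
#!/usr/bin/env bash
# Sketch only: render a clusterExpansionSpec where every hostSpec carries
# azName and hostNetworkSpec.networkPoolId.
# ASSUMPTION: all hosts share one license key, VDS name and vmnic layout
# (vmnic0 + vmnic2), as in the example spec. Values are NOT JSON-escaped.

LICENSE_KEY="${LICENSE_KEY:-XXXXX-XXXXX-XXXXX-XXXXX-XXXXX}"
VDS_NAME="${VDS_NAME:-CORP-VDS001}"

# render_expansion_spec (hypothetical helper): reads "id azName networkPoolId"
# rows on stdin and prints the JSON spec on stdout.
render_expansion_spec() {
  local id az pool sep=""
  printf '{\n  "clusterExpansionSpec": {\n    "hostSpecs": ['
  while read -r id az pool; do
    [[ -z "$id" ]] && continue
    # sep is empty before the first host and "," before every later one.
    printf '%s\n      {\n' "$sep"
    printf '        "id": "%s",\n' "$id"
    printf '        "licenseKey": "%s",\n' "$LICENSE_KEY"
    printf '        "azName": "%s",\n' "$az"
    printf '        "hostNetworkSpec": {\n'
    printf '          "networkPoolId": "%s",\n' "$pool"
    printf '          "vmNics": [\n'
    printf '            { "id": "vmnic0", "vdsName": "%s" },\n' "$VDS_NAME"
    printf '            { "id": "vmnic2", "vdsName": "%s" }\n' "$VDS_NAME"
    printf '          ]\n        }\n      }'
    sep=","
  done
  printf '\n    ]\n  }\n}\n'
}
```

Piping the four `id azName networkPoolId` rows for this cluster into `render_expansion_spec > expand-spec.json` should give a spec with the same structure as the one above. Review the file before submitting it.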

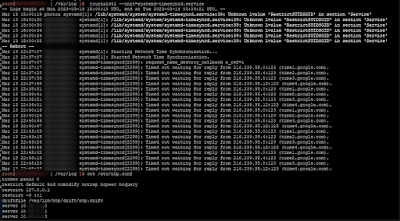

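Cleaning up stuck tasks before trying again

A failed expansion can leave subtasks of the EXPAND_STRETCHED_CLUSTER workflow stuck in the INITIALIZED state in the domainmanager database. The following script cancels them and restarts domainmanager so the expansion can be resubmitted. It modifies the SDDC Manager database directly, so take the snapshot mentioned at the top first.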

```bash
#!/bin/bash
# vcf-cleanup-stuck-tasks.sh
# Cleans up INITIALIZED (stuck) processing_task records in the VCF domainmanager DB.
# Run as root on the SDDC Manager appliance (the script restarts domainmanager.service).
# Usage: ./vcf-cleanup-stuck-tasks.sh [execution_id]
# If no execution_id is provided, cleans up the latest EXPAND_STRETCHED_CLUSTER run.

set -euo pipefail

PGHOST="localhost"
PGUSER="postgres"
PGDB="domainmanager"
# Array form avoids relying on word splitting of a command string.
PSQL=(psql -h "$PGHOST" -U "$PGUSER" -d "$PGDB" -t -A)

RED='\033[0;31m'
GREEN='\033[0;32m'
YELLOW='\033[1;33m'
CYAN='\033[0;36m'
NC='\033[0m'

log()  { echo -e "${CYAN}[INFO]${NC} $*"; }
ok()   { echo -e "${GREEN}[OK]${NC} $*"; }
warn() { echo -e "${YELLOW}[WARN]${NC} $*"; }
err()  { echo -e "${RED}[ERROR]${NC} $*" >&2; exit 1; }

# ── Resolve execution ID ──────────────────────────────────────────────────────
if [[ $# -ge 1 ]]; then
  EXEC_ID="$1"
  log "Using provided execution ID: $EXEC_ID"
else
  log "No execution ID provided, looking up latest EXPAND_STRETCHED_CLUSTER..."
  EXEC_ID=$("${PSQL[@]}" -c "
    SELECT id FROM execution
    WHERE name = 'EXPAND_STRETCHED_CLUSTER'
    ORDER BY start_time DESC
    LIMIT 1;" | head -1)
  [[ -z "$EXEC_ID" ]] && err "No EXPAND_STRETCHED_CLUSTER execution found in DB."
  log "Found latest execution: $EXEC_ID"
fi

# The ID is interpolated into SQL below, so reject anything but ID-like characters.
[[ "$EXEC_ID" =~ ^[A-Za-z0-9_-]+$ ]] || err "Invalid execution ID: '$EXEC_ID'"

# ── Verify execution exists and is not still running ─────────────────────────
EXEC_STATUS=$("${PSQL[@]}" -c "
  SELECT execution_status FROM execution WHERE id = '$EXEC_ID';" | head -1)
[[ -z "$EXEC_STATUS" ]] && err "Execution ID '$EXEC_ID' not found in database."
if [[ "$EXEC_STATUS" == "IN_PROGRESS" ]]; then
  err "Execution $EXEC_ID is still IN_PROGRESS; will not cancel subtasks of a running workflow."
fi
log "Execution status: $EXEC_STATUS"

# Prints the processing_task status breakdown for this execution.
show_breakdown() {
  "${PSQL[@]}" -c "
    SELECT status, COUNT(*) AS count
    FROM processing_task
    WHERE execution_id = '$EXEC_ID'
    GROUP BY status
    ORDER BY count DESC;" | column -t -s '|'
}

# ── Show current task status breakdown ───────────────────────────────────────
echo ""
log "Current processing_task status breakdown:"
show_breakdown
echo ""

# ── Count INITIALIZED tasks ───────────────────────────────────────────────────
INIT_COUNT=$("${PSQL[@]}" -c "
  SELECT COUNT(*) FROM processing_task
  WHERE execution_id = '$EXEC_ID' AND status = 'INITIALIZED';" | head -1)
if [[ "$INIT_COUNT" -eq 0 ]]; then
  ok "No INITIALIZED tasks found, nothing to clean up."
  exit 0
fi
warn "Found $INIT_COUNT INITIALIZED (stuck) tasks for execution $EXEC_ID"

# ── Confirm ───────────────────────────────────────────────────────────────────
read -r -p "$(echo -e "${YELLOW}Cancel these $INIT_COUNT tasks and restart domainmanager? [y/N]:${NC} ")" CONFIRM || true
[[ "${CONFIRM,,}" != "y" ]] && { warn "Aborted by user."; exit 0; }

# ── Cancel stuck tasks ────────────────────────────────────────────────────────
log "Cancelling $INIT_COUNT INITIALIZED tasks..."
# psql prints a command tag such as "UPDATE 5"; keep only the row count.
UPDATED=$("${PSQL[@]}" -c "
  UPDATE processing_task
  SET status = 'CANCELLED'
  WHERE execution_id = '$EXEC_ID' AND status = 'INITIALIZED';" \
  | grep -oE '[0-9]+' | head -1 || echo "0")
ok "Cancelled $UPDATED tasks."

# ── Verify ────────────────────────────────────────────────────────────────────
echo ""
log "Updated status breakdown:"
show_breakdown
echo ""

# ── Restart domainmanager ─────────────────────────────────────────────────────
log "Restarting domainmanager.service..."
systemctl restart domainmanager.service
log "Waiting 30 seconds for service to come up..."
sleep 30
# is-active exits non-zero when the unit is not active; '|| true' stops set -e
# from aborting before the status can be reported.
STATUS=$(systemctl is-active domainmanager.service || true)
if [[ "$STATUS" == "active" ]]; then
  ok "domainmanager.service is running."
else
  err "domainmanager.service failed to start (status: $STATUS). Check: journalctl -u domainmanager.service -n 50"
fi

echo ""
ok "Cleanup complete. Verify the SDDC Manager UI task list is clear before resubmitting."
```
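The fixed `sleep 30` in the cleanup script is a guess at how long domainmanager needs to start. A polling loop adapts to slow or fast restarts. The sketch below uses `wait_until`, a hypothetical helper that retries a command until it succeeds or a timeout expires. Keep in mind that `systemctl is-active` only reports that the unit is running, not that the domainmanager API is ready to accept requests.

```bash
#!/usr/bin/env bash
# Sketch only: poll a command instead of sleeping for a fixed time.
# wait_until is a hypothetical helper, not part of VCF or systemd.
# Usage: wait_until <timeout_seconds> <interval_seconds> <command> [args...]
# Returns 0 as soon as the command succeeds, 1 once timeout_seconds have elapsed.
wait_until() {
  local timeout="$1" interval="$2"
  shift 2
  local waited=0
  until "$@"; do
    if (( waited >= timeout )); then
      return 1
    fi
    sleep "$interval"
    (( waited += interval ))
  done
  return 0
}
```

In the cleanup script, the `sleep 30` and status check could then become `wait_until 120 5 systemctl is-active --quiet domainmanager.service || err "domainmanager.service did not become active"`. The 120-second timeout and 5-second interval are arbitrary example values.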